flowchart LR
    subgraph Consumers["Consumer Apps"]
        direction TB
        P[Pomodoro]
        U[Unit Converter]
        T[Tip Calculator]
    end

    subgraph Service["Global Chat Service"]
        Chat["POST /api/chat"]
    end

    subgraph External["External Services"]
        direction TB
        Neon["Neon Postgres + pgvector"]
        Vertex["Vertex AI Gemini"]
    end

    P & U & T --> Chat
    Chat -->|RAG + vector search| Neon
    Chat -->|completions + embeddings| Vertex

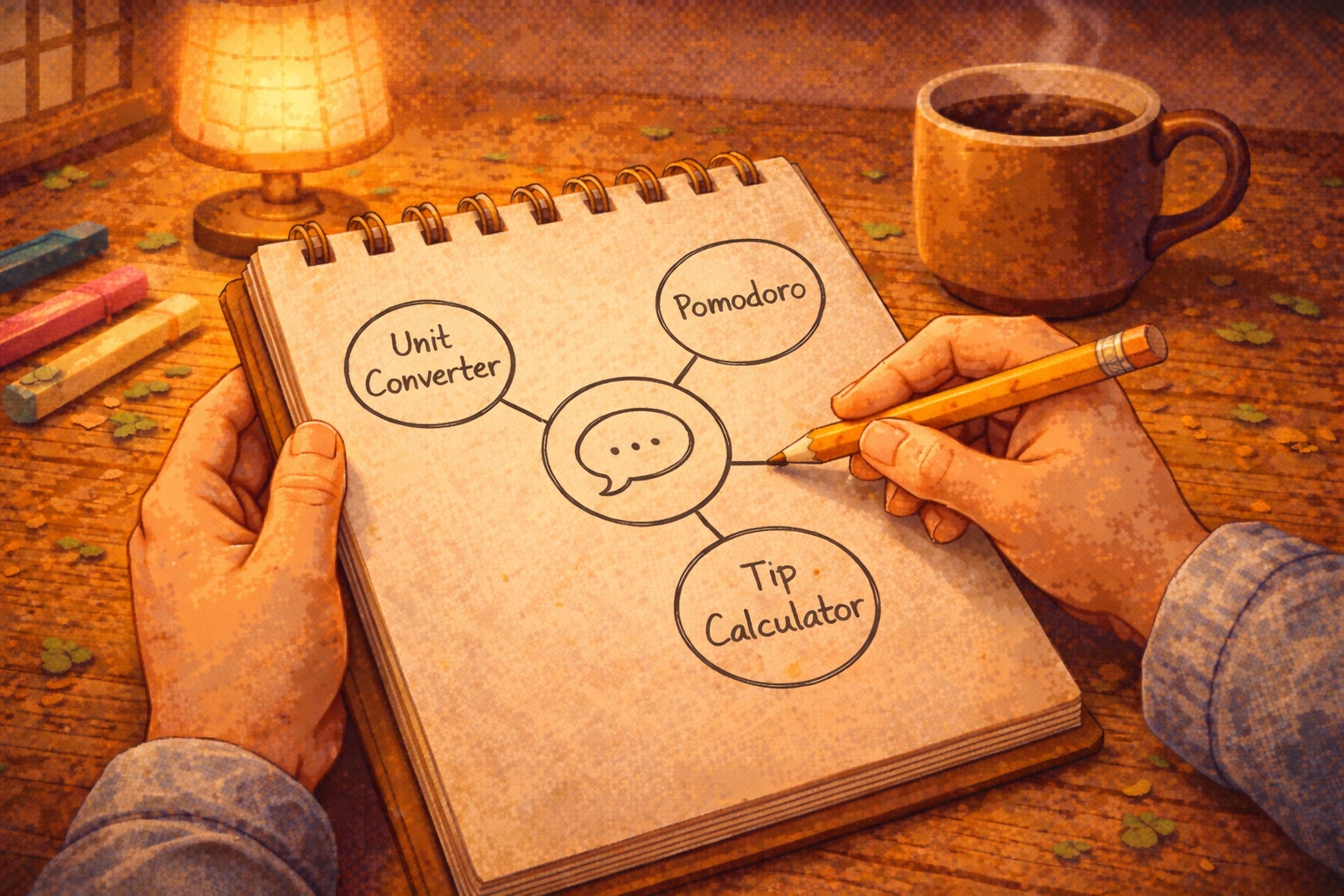

Unified Chat Service

What It Is

The Unified Chat Service is a frontend component library plus a central API for in-product AI chat. Consumer apps all plug into the same backend, which handles retrieval-augmented generation (RAG) via Neon Postgres + pgvector and chat completions via GCP Vertex AI.

Consumers call POST /api/chat with a product_id; the service fetches relevant docs, streams responses, and auto-registers events.
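As a rough illustration of the consumer side, the sketch below builds a request body and reads the streamed reply. Only the endpoint and the product_id, user, and messages fields come from this page. The field types, the role values, the helper names (buildChatRequest, streamChat), and the assumption that the stream is plain text are all hypothetical.

```typescript
// Hypothetical request shape: the field names come from the page,
// but their types and the allowed role values are assumptions.
interface ChatMessage {
  role: "user" | "assistant";
  content: string;
}

interface ChatRequest {
  product_id: string;
  user: string;
  messages: ChatMessage[];
}

// Builds the JSON body a consumer app would send to POST /api/chat.
function buildChatRequest(
  productId: string,
  user: string,
  messages: ChatMessage[],
): ChatRequest {
  if (messages.length === 0) {
    throw new Error("messages must contain at least one entry");
  }
  return { product_id: productId, user, messages };
}

// Sends the request and passes each decoded chunk to onChunk as it arrives.
// Assumes the service streams plain text; the real wire format may differ.
async function streamChat(
  baseUrl: string,
  request: ChatRequest,
  onChunk: (text: string) => void,
): Promise<void> {
  const response = await fetch(`${baseUrl}/api/chat`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(request),
  });
  if (!response.ok || response.body === null) {
    throw new Error(`chat request failed with status ${response.status}`);
  }
  const reader = response.body.getReader();
  const decoder = new TextDecoder();
  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    onChunk(decoder.decode(value, { stream: true }));
  }
}
```

A consumer app would call buildChatRequest with its own product_id and hand the result to streamChat, appending each chunk to the visible reply as it arrives.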

Architecture

Services: Neon Postgres (pgvector) for vector search; GCP Vertex AI (text-embedding-005 for embeddings, Gemini 2.5 Flash for completions); NextAuth with GitHub sign-in for document management at /library. The service is hosted on Vercel.

RAG flow: Consumer sends POST /api/chat with product_id, user, and messages. The service extracts search terms from the last message, embeds the query, runs cosine similarity search on document_chunks, builds a system prompt with the top 5 chunks, then streams the completion.
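The retrieval and prompt-building steps above can be sketched in memory as follows. This is a stand-in, not the service's code: in the real service the similarity search runs inside Postgres through pgvector (whose `<=>` operator computes cosine distance), and the embeddings come from Vertex AI. The Chunk type, the function names, and the prompt wording are assumptions made for illustration.

```typescript
// Hypothetical in-memory stand-in for a row of document_chunks.
interface Chunk {
  content: string;
  embedding: number[];
}

// Cosine similarity: dot(a, b) / (|a| * |b|). Returns 0 for a zero vector.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("embedding dimensions do not match");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Ranks chunks by similarity to the query embedding and keeps the top k
// (the page states the service uses the top 5).
function topChunks(query: number[], chunks: Chunk[], k: number = 5): Chunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosineSimilarity(query, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((entry) => entry.chunk);
}

// Builds a system prompt from the retrieved chunks; the wording is illustrative.
function buildSystemPrompt(chunks: Chunk[]): string {
  const context = chunks
    .map((chunk, i) => `[${i + 1}] ${chunk.content}`)
    .join("\n\n");
  return `Answer using only the documentation excerpts below.\n\n${context}`;
}
```

Running the search in the database rather than in application code, as the service does, avoids pulling every embedding over the network for each query.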

Data model: projects (one per consumer app) contain documents (uploaded via /library), which are split into document_chunks, each stored with a 768-dimensional embedding. Cross-origin requests are restricted to the origins listed in ALLOWED_ORIGINS.
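One way to picture the hierarchy and the origin check is the sketch below. Only the project, document, and document_chunks levels, the 768-dimension embedding size, and the ALLOWED_ORIGINS name come from this page. The field names, the LibraryDocument type name, and the assumption that ALLOWED_ORIGINS holds a comma-separated list are hypothetical.

```typescript
// Hypothetical row shapes for the three levels of the data model.
interface Project {
  id: string;
  name: string; // one project per consumer app
}

interface LibraryDocument {
  id: string;
  projectId: string; // parent project
  title: string;
}

interface DocumentChunk {
  id: string;
  documentId: string; // parent document
  content: string;
  embedding: number[];
}

// Embedding size stated on the page.
const EMBEDDING_DIMENSIONS = 768;

// Checks that a chunk carries a finite embedding of the expected size.
function isValidChunk(chunk: DocumentChunk): boolean {
  return (
    chunk.embedding.length === EMBEDDING_DIMENSIONS &&
    chunk.embedding.every((value) => Number.isFinite(value))
  );
}

// Assumes ALLOWED_ORIGINS is a comma-separated list; the real format may differ.
function parseAllowedOrigins(raw: string | undefined): string[] {
  return (raw ?? "")
    .split(",")
    .map((origin) => origin.trim())
    .filter((origin) => origin.length > 0);
}

// Exact-match origin check; requests without an Origin header are rejected.
function isOriginAllowed(origin: string | null, allowed: string[]): boolean {
  return origin !== null && allowed.includes(origin);
}
```

In a deployed service the raw value would typically be read from the environment and the check applied before any CORS response headers are set.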

Try the Apps

The consumer apps below demonstrate distinct styling and RAG tuned to each product’s documentation.

Chat Service Demo

Unit Converter

Minimal Pomodoro

Tip Calculator

Central service + RAG on Neon + Vertex AI. Consumers stay small; the heavy lifting happens once.